Don’t Bet Your Business on Manual Recovery

Remove the guesswork with tested, orchestrated recovery

- Automate low-impact testing to verify recovery

- Orchestrate recovery, tailored to your needs

- Prove compliance with dynamic test reports

Orchestrate Radical Resilience

Add Confidence to Your Recovery Plan

Automate Low-Impact Testing to Verify Recovery

Bounce back strong with automated testing to highlight potential impacts.

Orchestrated Recovery, Tailored to Your Needs

Handle multiple plans with hundreds of machines all at once.

Prove Compliance With Dynamic Test Reports

Automatically create reports after each test to show how well you met your recovery goals.

Recovery Is Your Last Line of Defense

Clean Recovery With a Single Click

NEW

Seamless Recovery

Restore clean data by continuously scanning for ransomware and identifying the latest clean restore point.

NEW

Shine the Spotlight on Malware

Highlight threats, assess risks and measure your environment’s security score in the Veeam Threat Center.

NEW

Planned Failover Without Disruption

Verified and carefully orchestrated recovery for times when you need to strategize and execute failover without disrupting the critical workloads that drive your business.

NEW

Automated Cloud Recovery Validation

Get your time back by automating manual post-recovery tasks when recovering an on-premises workload into Microsoft Azure.

Enterprise Integration

Achieve a perfect fit into your environment using APIs and run custom scripts during testing and execution.

Recovery to the Cloud

With Orchestrated Direct Restore to Microsoft Azure, your business gains extra resilience through cloud-based disaster recovery.

Automated Testing

Conduct non-disruptive disaster recovery tests, scheduled or on-demand, to make sure your desired RTOs and RPOs are achievable.

Dynamic Documentation

Generate and update disaster recovery documentation automatically to prove readiness and compliance.

One-Click Recovery

Effortlessly recover individual applications or entire sites from any location with just one click from the web interface.

Secure Role-Based Access

Delegate and control secure access for application owners and operations teams.

Application Verification

Confirm that your common enterprise applications are functioning correctly post-recovery.

Instant Test Lab

Use your backups and replicas for patch testing without impacting production.

Veeam Data Platform

Be resilient in a world of cyberthreats

- Detect and identify cyberthreats

- Respond and recover faster from ransomware

- Secure and compliant protection for your data

Stay Confident in the Face of Disaster

Veeam Threat Center

Get a global view of your overall protection status.

Orchestrate to the Cloud

Planned, tested and automated direct restore to Microsoft Azure.

Plan Your Recovery

Automate and sequence recovery actions.

Prove Your Recovery

Automatically produce and update detailed documentation.

Unlock Enterprise-Grade Protection

Available in three comprehensive enterprise-grade editions, with our most powerful Premium option bringing you the complete, secure protection that can only be achieved with Veeam Data Platform.

Veeam Data Platform

Foundation

- New Inline Malware Detection

- New SIEM integration via syslog

- New Security & Compliance Analyzer

Advanced

All features of Foundation plus

- Real‑time reporting, analysis and issue remediation

- New Veeam Threat Center

- New ServiceNow integration

Premium

All features of Advanced plus

- Compliant and tested recovery, tailored to your needs

- New DR to cloud with automated network quarantine

- New Recovery plan testing with malware scanning

Incident Recovery Services

Veeam Cyber Secure is an elite program with protection at every stage of a cyber incident.

Veeam-Powered BaaS and DRaaS

Looking for a managed service? Access Veeam-powered BaaS & DRaaS delivered by Veeam partners for:

- Managed BaaS and off-site backup

- DRaaS

- BaaS for Microsoft 365

- BaaS for Public Cloud

Stay Updated on Recovery Orchestration Trends

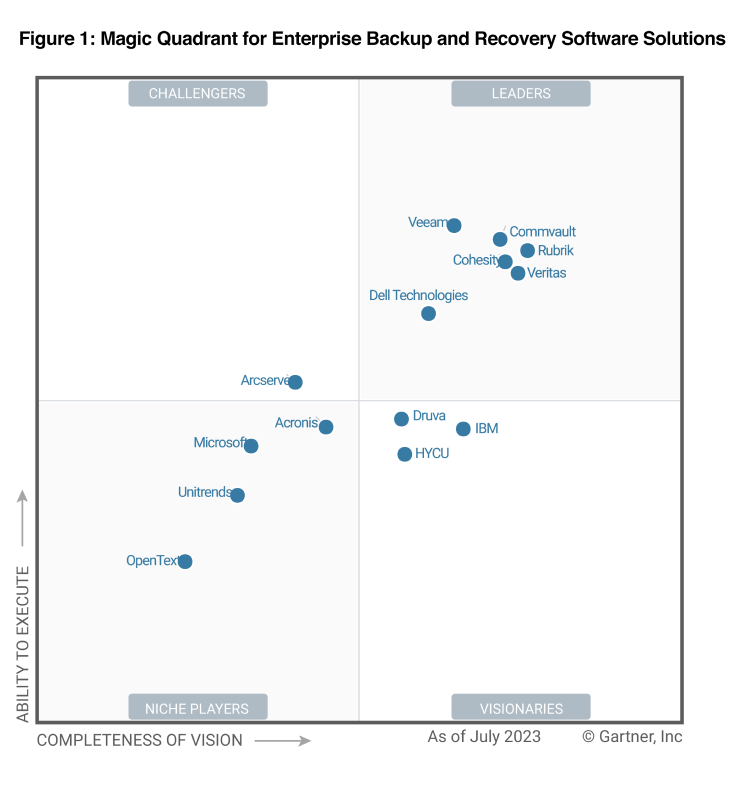

2023 Gartner® Magic Quadrant™

Veeam positioned highest for Ability to Execute for the 4th consecutive time and a Leader for the 7th time.

2022 Modern Data Protection: Definitive Guide to Veeam

Learn about the business objectives of 3,400 IT experts and decision-makers and how Veeam can help.

Webinar: 20 Things Your Disaster Recovery Plan is Missing

Our Veeam experts discuss DR as a journey from end to end.

Definitive Guide to Veeam

Data has become the most valuable currency in today’s economy. Protecting your data is the first step in effectively managing and maximizing its potential.

FAQs

What is a disaster recovery solution?

Why is a DR solution important?

What is the difference between data backup and disaster recovery solutions?

How does Veeam’s recovery orchestration solution work?

What is the recovery time objective (RTO) and recovery point objective (RPO) of Veeam Recovery Orchestrator?

What types of disasters can Veeam Recovery Orchestrator handle?

How can I create and test my disaster recovery plan?

Where should I back up my data for disaster recovery?

What are the best methods for disaster recovery?

Can a disaster recovery product work in hybrid or multi-cloud environments?

Can Veeam Recovery Orchestrator be integrated with existing backup solutions?

How does Veeam Recovery Orchestrator compare to other DR solutions?

Radical Resilience Starts Here

hybrid cloud and the confidence you need for long-term success.