Though virtualization has become the new normal for businesses of all sizes, physical server infrastructures are still quite widespread. Do you think that companies using traditional, physical server networks are wasting money? This post will show you why this is exactly the case! But before reading on, I suggest watching this educational video.

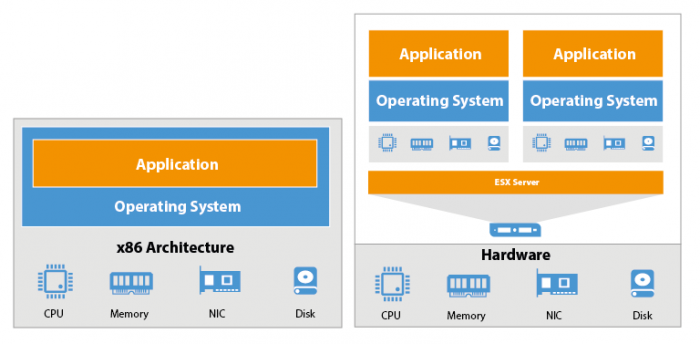

Let’s start with what we had when physical servers were the platform of choice. The architecture of a physical server is quite plain. Each server has its own hardware: memory, network, processing and storage resources. The server operating system is loaded on this hardware, and the applications run on top of the OS. Pretty straightforward.

With a virtual infrastructure, you have the same physical server with all its resources, but instead of the server operating system, a hypervisor such as VMware ESXi (the hypervisor in vSphere) or Microsoft Hyper-V is loaded on it. The hypervisor is where you actually create your virtual machines. As you can see in the diagram, each VM has its own virtual devices: virtual CPU, virtual memory, virtual network interface cards and its own virtual disk. On top of this virtual hardware you load a guest operating system and then your traditional server applications.

The benefits of virtualization are obvious: instead of having just one application per server, you can now run several guest operating systems and a handful of applications on the same physical hardware. That’s right, virtualization can bring you so much more for your money!

Hardware Independence and VM portability

So what enables a virtual machine to be portable across physical machines running the same hypervisor? As mentioned, every virtual machine has its own virtual hardware. The guest operating system loaded on a VM is aware only of this virtual hardware configuration, not of the physical server’s. In other words, a VM is independent of the underlying physical hardware. This means that the operating system installed on a VM is no longer tied to particular hardware, and you can easily move virtual machines from one physical server to another or even to another data center!
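
To make the idea concrete, here is a minimal toy model of hardware independence. It is only an illustration, not how any real hypervisor represents VMs: all class, field and host names are hypothetical, and it ignores real-world constraints such as CPU compatibility, storage and networking. The point it shows is that the placement check never looks at the physical server model, only at the hypervisor and the free capacity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VirtualHardware:
    """The only hardware the guest OS ever sees."""
    vcpus: int
    memory_gb: int
    disk_gb: int
    nics: int


@dataclass(frozen=True)
class VirtualMachine:
    name: str
    guest_os: str
    hypervisor: str  # hypervisor the VM was built for
    hardware: VirtualHardware


@dataclass(frozen=True)
class Host:
    name: str
    hypervisor: str
    server_model: str  # physical detail, hidden from the guest
    free_cores: int
    free_memory_gb: int


def can_host(host: Host, vm: VirtualMachine) -> bool:
    """A host qualifies if it runs the same hypervisor and has spare capacity.

    Note that server_model is never consulted: that is the toy-model
    version of hardware independence.
    """
    return (
        host.hypervisor == vm.hypervisor
        and host.free_cores >= vm.hardware.vcpus
        and host.free_memory_gb >= vm.hardware.memory_gb
    )


# Hypothetical example data
vm = VirtualMachine("mail01", "Windows Server", "hypervisor-a",
                    VirtualHardware(vcpus=2, memory_gb=8, disk_gb=100, nics=1))
old_server = Host("rack1-old", "hypervisor-a", "vendor X, 5 years old", 4, 16)
new_server = Host("rack2-new", "hypervisor-a", "vendor Y, brand new", 32, 256)
other_stack = Host("lab-box", "hypervisor-b", "vendor X, 5 years old", 32, 256)

for host in (old_server, new_server, other_stack):
    print(host.name, "can run", vm.name, "->", can_host(host, vm))
```

In this sketch the two servers from different vendors are both valid targets, while the host running a different hypervisor is not, which mirrors the "same hypervisor" condition in the question above.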

This makes VMs highly portable! You can copy one to a flash drive, bring it home and replicate it in your home lab, give it to a friend or send it to your clients! You can even replicate a virtual machine across a WAN or the Internet!

More goodies of virtual machines

We have covered one of the key features that virtualization provides: virtual machine portability, made possible by hardware independence. It lets you easily migrate a VM wherever you like: you can back it up and restore it on another server, put it on a flash drive and run it in your home lab or on your workstation, or even take the VM to another site! But that’s not all! Many other useful features build on hardware independence and VM portability:

- vMotion is a VMware technology that builds on VM portability and hardware independence to migrate a running VM from one server to another with no downtime for the end user!

- Distributed Resource Scheduler or DRS. This VMware technology balances resource consumption across the virtual infrastructure. DRS can move a running VM from one host to another (by means of vMotion) in order to give it the resources it needs to operate effectively.

- VMware High Availability or VMware HA is an option that restarts the VMs from a failed server on another server, so that they are back up and running in no time.

- Distributed Power Management or DPM is another great VMware feature that can help you reduce your company’s energy bill! With this feature, you can easily bring the infrastructure’s power consumption under control. DPM consolidates VMs on fewer physical servers when resource consumption across the virtual infrastructure is low. The servers that are not needed are powered down until demand rises again.

- Virtualization makes disaster recovery way simpler as well. Thanks to hardware independence, if a server or a VM inside your virtual infrastructure fails, you can just run your backed-up VMs on any other server, because the guest OSs are no longer tied to particular hardware.
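
The consolidation idea behind DPM can be sketched in a few lines. The following is a simplified illustration using a first-fit-decreasing packing heuristic on memory demand only; it is an assumption chosen for clarity, not VMware's actual algorithm, and the function name, host names and numbers are all hypothetical.

```python
def consolidate(vm_demand_gb: dict[str, int],
                host_capacity_gb: dict[str, int]) -> tuple[dict[str, list[str]], list[str]]:
    """Pack VMs onto as few hosts as a simple heuristic allows.

    Returns (placement, idle_hosts). Hosts left without VMs are the
    candidates for powering down. Only memory is considered here.
    """
    placement: dict[str, list[str]] = {host: [] for host in host_capacity_gb}
    free = dict(host_capacity_gb)

    # First-fit decreasing: place the largest VMs first,
    # each on the first host that still has room.
    by_size = sorted(vm_demand_gb.items(), key=lambda item: item[1], reverse=True)
    for vm, demand in by_size:
        for host in free:
            if free[host] >= demand:
                placement[host].append(vm)
                free[host] -= demand
                break
        else:
            raise ValueError(f"no host has room for {vm}")

    idle_hosts = [host for host, vms in placement.items() if not vms]
    return placement, idle_hosts


# Hypothetical quiet period: four small VMs, three 32 GB hosts
vms = {"web": 8, "db": 16, "mail": 4, "test": 2}
hosts = {"host1": 32, "host2": 32, "host3": 32}

placement, idle_hosts = consolidate(vms, hosts)
print("placement:", placement)
print("can be powered down:", idle_hosts)
```

With these numbers all four VMs fit on host1, so host2 and host3 are reported as idle. In a real environment the moves themselves would be carried out by live migration (vMotion, as described above), and a scheduler would also weigh CPU load, headroom and policy rules.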

And this is just the tip of the iceberg! Virtual machines offer many more great features that traditional physical server infrastructure lacks. But to make the most of the new functionality that virtualization brings, you should use the right tools for monitoring, management and, of course, data protection. Since virtual machines differ markedly from physical servers, tools designed for the latter are a poor fit for the former. This is especially true for backup.

A few resources for those who still need great physical solutions from Veeam:

- Blog post: Veeam FastSCP for physical servers? You bet

- Another post on the Veeam Blog: Announcing a free desktop and laptop backup tool

- Whitepaper: Cisco & Veeam Help Customers Achieve Digital Transformation